DSCC

info@displaysupplychain.com

FOR IMMEDIATE RELEASE: 09/19/2022

Measuring Light and Color on AR/VR Displays: Interview with Radiant Vision Systems

La Jolla, CA -

The second annual AR/VR Display Forum is taking place this week (September 20-21). Hosted by DSCC, this virtual conference focuses on display technologies for augmented reality and virtual reality.

Radiant Vision Systems is a Platinum Sponsor and will present their innovative solution for testing displays found inside headsets and smart glasses. The company, which provides test and measurement solutions for displays and light sources, has been a part of Konica Minolta’s Sensing Business Unit since 2015.

In this interview, Eric Eisenberg, Optics Development Manager, talks about the unique challenges with AR/VR displays and why Radiant developed a new lens to measure these displays.

Radiant is well known in the display industry. Is there a system or product that has been particularly popular over the years?

Radiant’s solutions are all built on our fundamental testing platform: TrueTest™ Automated Visual Inspection Software. This software is a capability-rich test executive that integrates the operations of Radiant’s ProMetric® Imaging Colorimeters and Photometers—as well as other light measurement products—with the production line to enable automated inspection of consumer electronics. TrueTest is used for image processing, analysis, and data output, much like machine vision software. However, this software is specially designed to work with our photometric imaging systems to output quantitative values of light—for example, luminance and chromaticity.

The software offers advanced algorithms for registering unique illuminated regions (in other words, defining the area for measurement, which can sometimes be a complex shape). It also includes image processing techniques to mitigate image distortion, angular dependencies of light, and other effects. The platform is a very powerful tool because it not only obtains the measurements needed to qualify a device, but it can run tests against user-defined tolerances and output pass/fail values for several tests in rapid sequence. The software controls the test images displayed on a device (such as an AR/VR headset), triggers the camera functions, applies image processing & analysis, and outputs data. It even includes an API and SDK for further communication to external systems.
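To make the idea of running tests against user-defined tolerances concrete, here is a minimal sketch in Python. It is not the TrueTest API or SDK, which the interview does not describe; the `Tolerance` class, the `run_tests` function, the measurement names, and all numeric limits are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tolerance:
    """Hypothetical user-defined limits for one measured quantity."""
    name: str
    low: float
    high: float


def run_tests(measurements, tolerances):
    """Return {test name: "PASS" or "FAIL"} for each tolerance, in order."""
    results = {}
    for tol in tolerances:
        value = measurements.get(tol.name)
        # A missing measurement counts as a failure rather than a silent pass.
        ok = value is not None and tol.low <= value <= tol.high
        results[tol.name] = "PASS" if ok else "FAIL"
    return results


# Illustrative values only; these are not real device data.
measurements = {"luminance_cd_m2": 182.0, "cie_x": 0.313, "cie_y": 0.345}
tolerances = [
    Tolerance("luminance_cd_m2", 150.0, 250.0),
    Tolerance("cie_x", 0.303, 0.323),
    Tolerance("cie_y", 0.319, 0.339),
]

print(run_tests(measurements, tolerances))
```

In this example the luminance and CIE x values fall inside their limits, while CIE y exceeds its upper limit and is reported as a failure.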

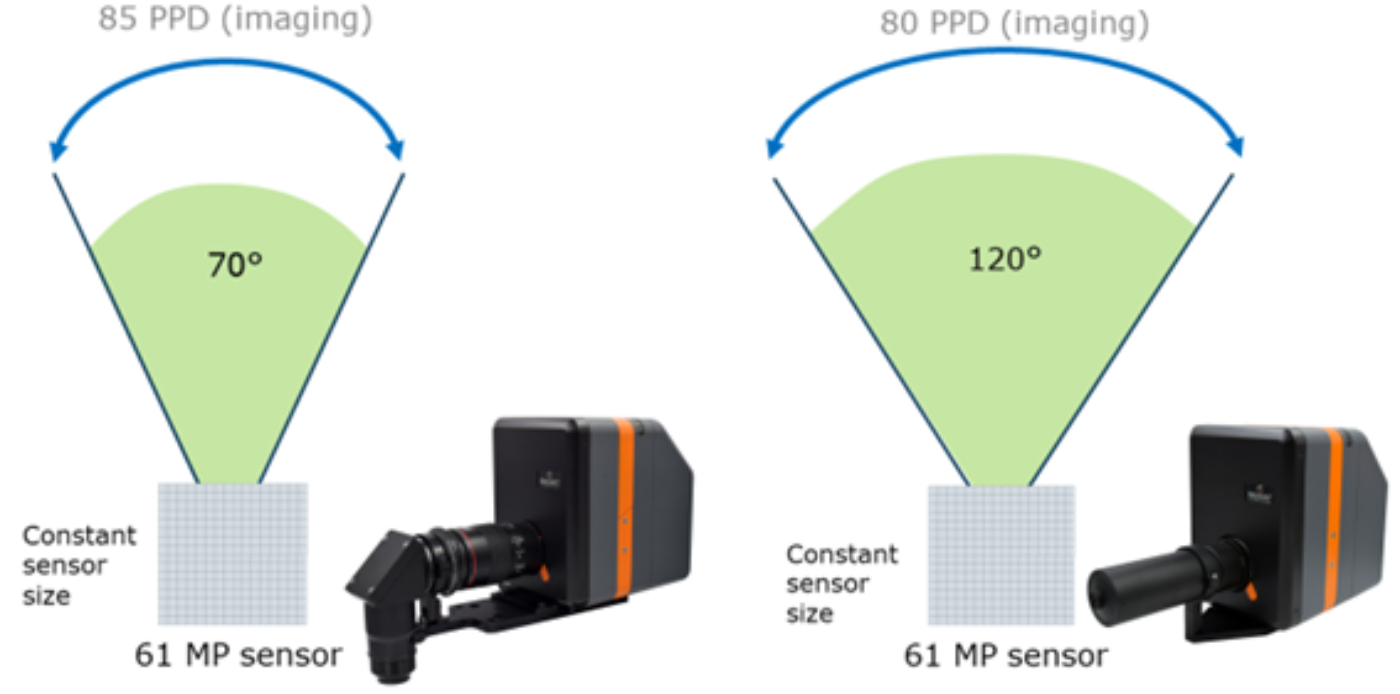

A ProMetric/TrueTest solution is one of the leading choices for display testing because the features offered are so advanced and capabilities are very broad. For example, Radiant uniquely offers imaging resolutions up to 61 megapixels (MP) to perform tests such as pixel-level measurement of displays, obtaining all subpixel output values in a single image. This greatly improves efficiency and data accuracy for display testing. Also, when it comes to meeting production manufacturing demands, there’s no real competition for a light measurement solution that can be applied for in-line pass/fail analysis and industrial communication, as is the case with TrueTest.

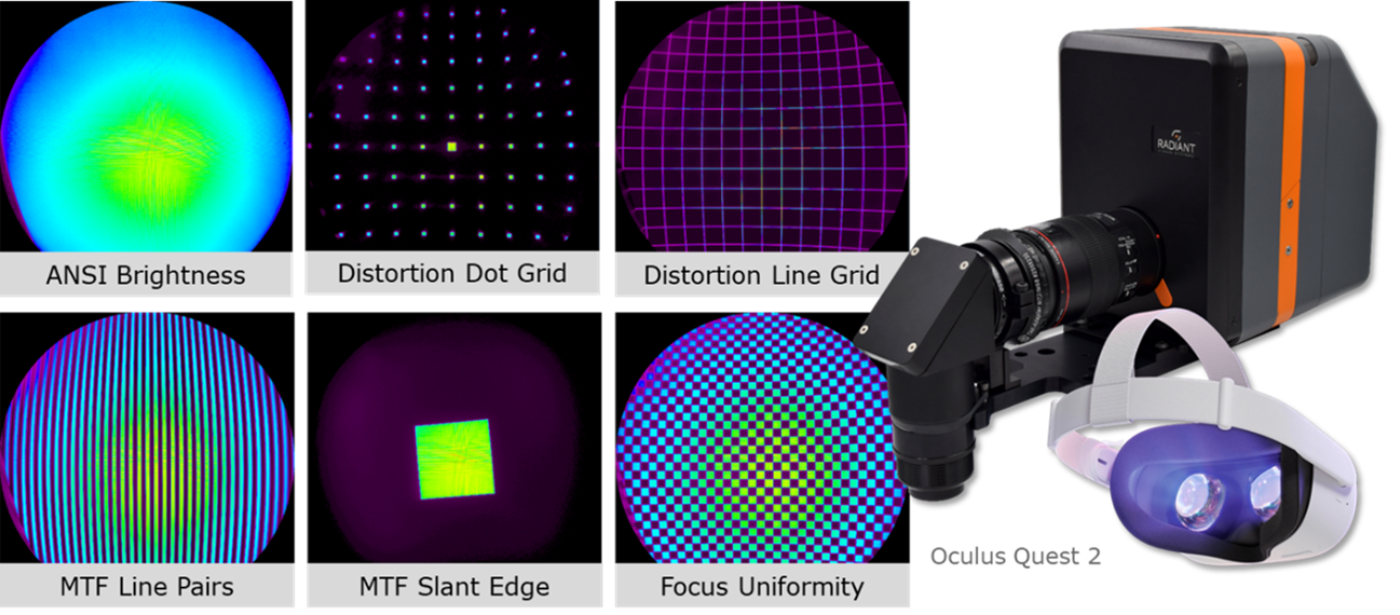

Lastly, because we have so much flexibility in how we can apply the features of our solutions, Radiant frequently packages unique flavors of ProMetric and TrueTest together to solve specific applications. For example, any ProMetric camera can be paired with lenses designed for capturing images inside of AR/VR headsets, and we’ve packaged a suite of TrueTest capabilities specifically for testing the qualities of headset displays, called our TT-ARVR™ software module. It’s really exciting to offer this much diversity on a single, fundamental platform.

When did you start investigating AR/VR applications? Did you notice a shift in the industry?

Of course, AR/VR technology has long been exciting from an experimental stage, but commercialization and adoption of these systems didn’t take off until leading consumer device manufacturers stepped in around 5-10 years ago. At least as a concept, the Google Glass in 2014 spurred others to contemplate the viability of headsets for practical consumer use. In 2016 and 2017 there was a surge of new technology, including the Oculus Rift by (then) Facebook, the HTC Vive, PlayStation VR, and the first HoloLens from Microsoft.

It was around this same time that Radiant began rapidly developing our first solution for in-headset display testing. Our customers needed quality control solutions beyond the capabilities of standard imaging systems, lenses, and software—typically applied for flat panel displays (FPD).

The unique specifications and visualization parameters of a display intended for viewing at close range inside a headset required a different approach. To really understand the true quality of a display in this context, we knew we needed a system that could replicate the viewing conditions of the user. The complexity was finding a lens that could capture a wide field of view (FOV) of visual elements at close proximity to the display in the headset, just like the human eye does. But there wasn’t a commercially available lens capable of what we needed to do.

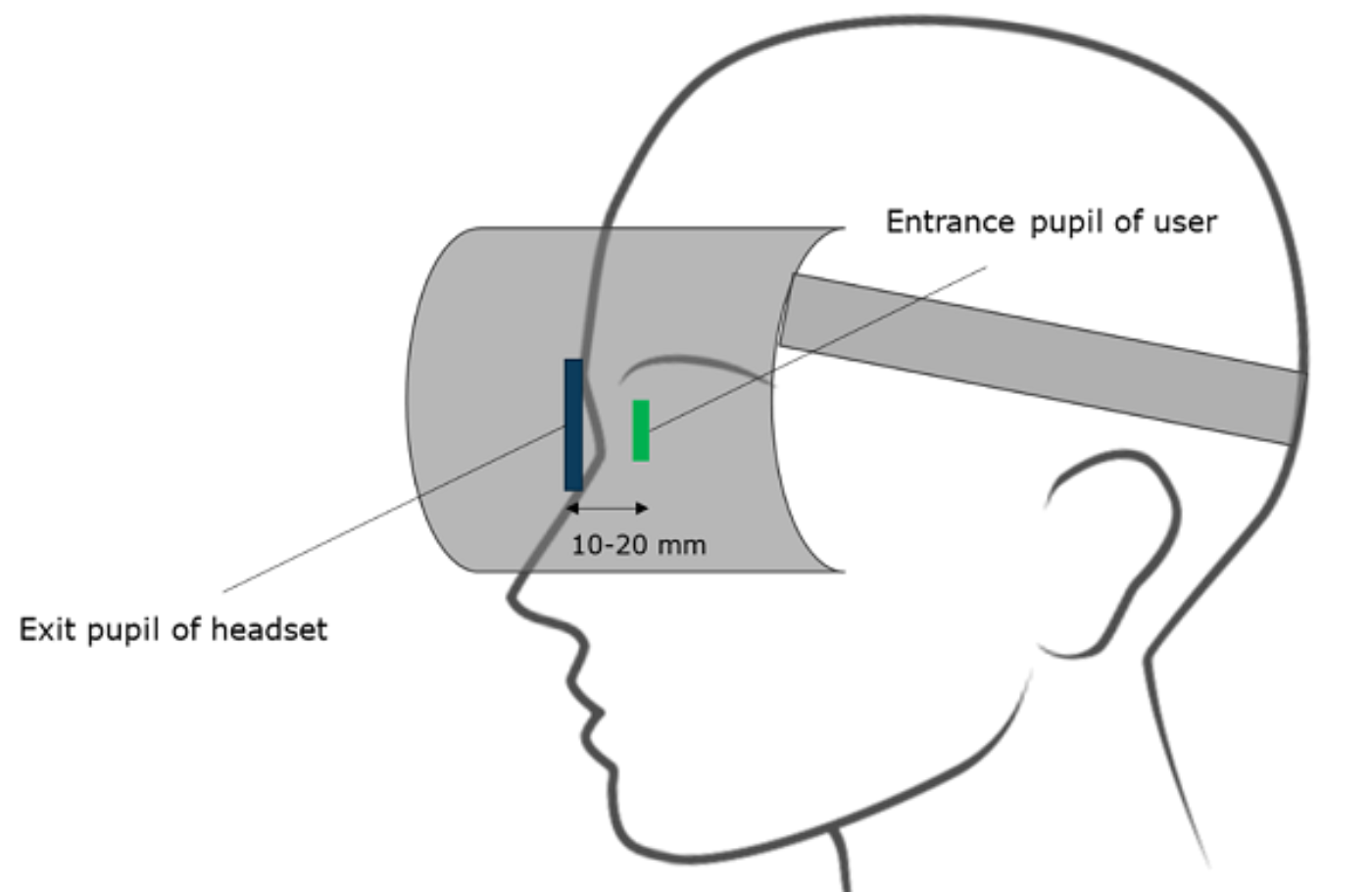

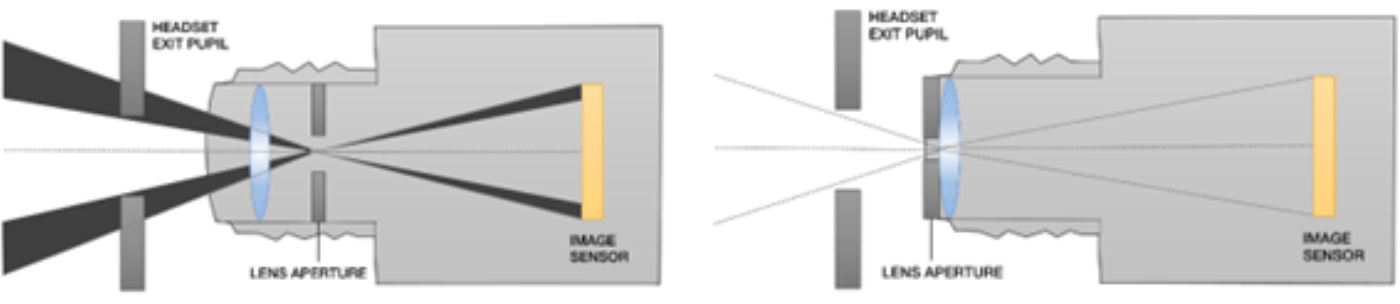

So, Radiant developed our own lens, which we aptly called the AR/VR Lens. The two hallmarks of the lens are its wide-FOV optics (which allow it to capture up to 120° full-angle horizontal FOV) and an aperture at the front of the lens, rather than embedded behind other optical components and housing. This aperture position is really important. When you look at the position of a standard lens aperture, you have all of this component structure up front that blocks the lens’s optical entrance pupil, which emulates the human pupil. The lens entrance pupil needs to be where the human eye would be in an AR/VR headset. By designing a lens with the aperture at the front, we’re now able to put the “eye” of our imaging system at the user’s eye position and see everything the user can.

How important is AR/VR for Radiant's growth now?

AR/VR is one of many industries that Radiant supports, all of which play a role in our development and our success. Like other consumer electronics industries, AR/VR is growing and Radiant has the opportunity to grow with it. The exciting thing about the AR/VR industry is that we are able to address measurement challenges by leveraging all of our existing imaging and software technology, using them as building blocks to develop new solutions and address new requirements. Testing AR/VR displays requires a slightly different solution for each unique headset. Not only does this provide a lot of opportunity for creative design by our optics development team, but we’ve also found ways to remain nimble and efficient using some very fundamental technology.

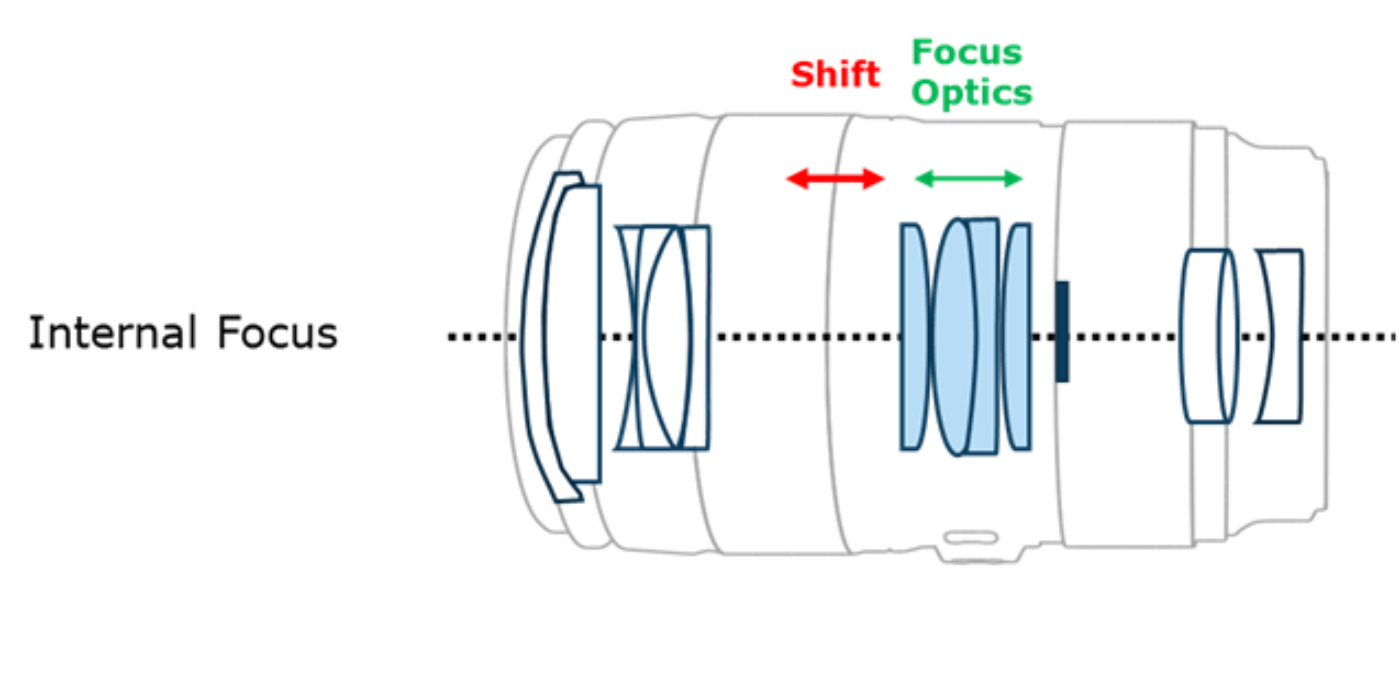

Our latest AR/VR display test solution speaks to how we’ve continued to adapt to meet changes in the AR/VR space. The XRE Lens is a product derived from a patent-pending invention that uses off-the-shelf components to produce a range of “custom” optical test system architectures without the time, cost, and complexity of a fully custom lens. This is unique among test systems on the market. Usually, manufacturers’ devices and specifications are so exclusive that a ground-up optical system needs to be designed for each test case (not to mention that these custom solutions are rarely flexible; any changes in the manufacturer’s requirements may require a complete test system redesign). Now, with the XRE Lens, we’ve reduced optical test system development cycles to a fraction of what they were. The solution can adapt quickly as the manufacturer’s specifications change.

In the future, I anticipate the diversity of AR/VR devices on the market will expand, and we will see even more realizations of the XRE Lens and other optical testing technologies derived from this innovation.

In a nutshell, what types of tests need to be performed?

In very simple terms, we test light and color. Qualities of light and color can be measured with our systems and then used to derive an understanding of any visual element of the display. A customer may want to measure the luminance (brightness of the display) and whether the luminance is uniform across the display. Our systems also measure chromaticity (color) to test if the color output of the display matches expectations and has been produced correctly per the device specifications.
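As an illustration of a luminance uniformity check, the sketch below divides a luminance map into zones and reports the ratio of the darkest zone to the brightest. The min/max definition, the zone grid, and the function names are assumptions made for this example; the interview does not specify how Radiant's software computes uniformity.

```python
def zone_means(image, rows, cols):
    """Average a 2D luminance map (list of lists, cd/m^2) over rows x cols zones.

    Assumes the image has at least `rows` pixel rows and `cols` pixel columns.
    Zone means are returned in row-major order.
    """
    height, width = len(image), len(image[0])
    means = []
    for r in range(rows):
        y0, y1 = r * height // rows, (r + 1) * height // rows
        for c in range(cols):
            x0, x1 = c * width // cols, (c + 1) * width // cols
            values = [image[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            means.append(sum(values) / len(values))
    return means


def uniformity_percent(means):
    """Uniformity as darkest zone / brightest zone, in percent (assumed definition)."""
    return 100.0 * min(means) / max(means)


# Illustrative 4x4 luminance map in cd/m^2; not real measurement data.
image = [
    [100, 100, 90, 90],
    [100, 100, 90, 90],
    [80, 80, 100, 100],
    [80, 80, 100, 100],
]

means = zone_means(image, 2, 2)
print(means)                      # [100.0, 90.0, 80.0, 100.0]
print(uniformity_percent(means))  # 80.0
```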

Depending on our customer requirements, we also look for things like pixel defects, particle defects, the sharpness or the focus of the display, the contrast, image sticking, warping and distortion of the rendered image’s aspect ratio—many different characteristics that are potentially undesirable. These could be defects that result during manufacturing processes that the manufacturer wants to eliminate from a finished product or (if possible) correct during the design and manufacturing phases to yield products with higher visual quality.

Essentially, our systems will detect any visual issue that a user would see on an illuminated display that might make them question the quality of the device or potentially drive a return of the product.

It's worth noting also that defects could originate at any step in the component architecture, from the display itself to the performance of any single optical element to the combined effect of the optical elements in the final assembly. In an ideal scenario, there’s testing at each component stage to help isolate the source of the defect so these issues can be resolved before they compound further down the line. Ultimately, manufacturers will be running a series of tests or a complete, automated test sequence for a range of display output states and visual qualities while viewing the display as a user would through the assembled headset architecture.

How are these tests different from the typical tests in the FPD industry? Are there specific challenges for testing AR/VR displays?

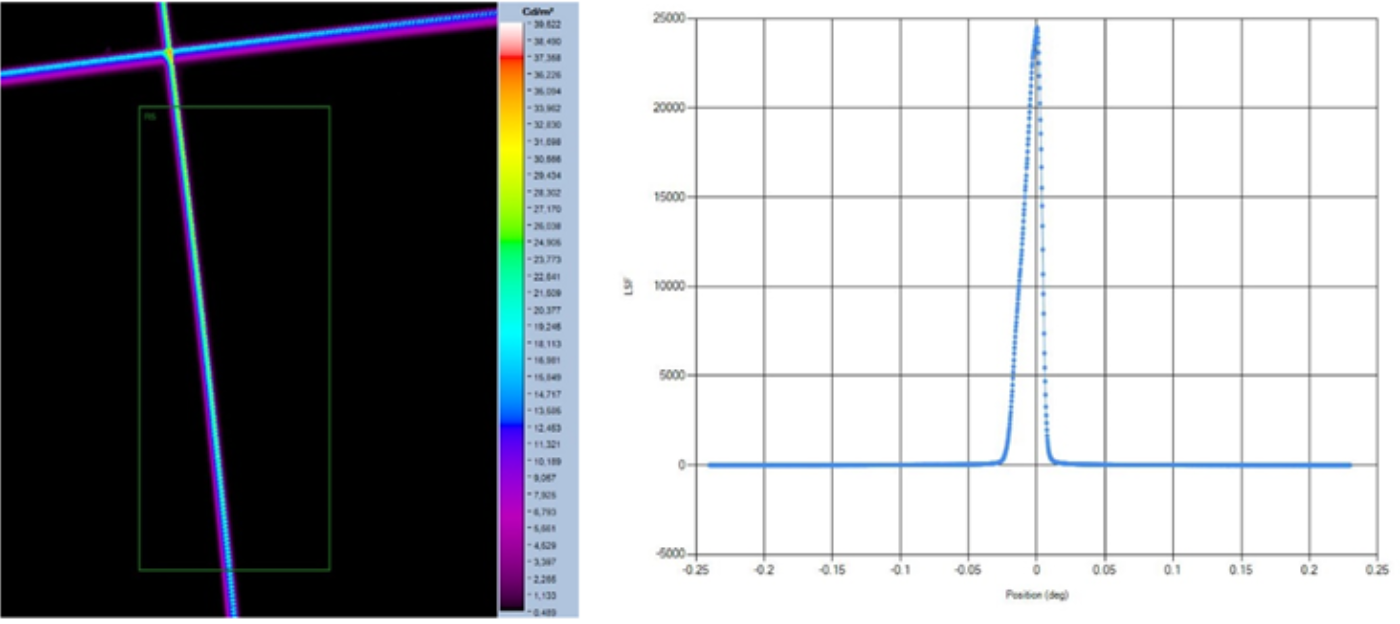

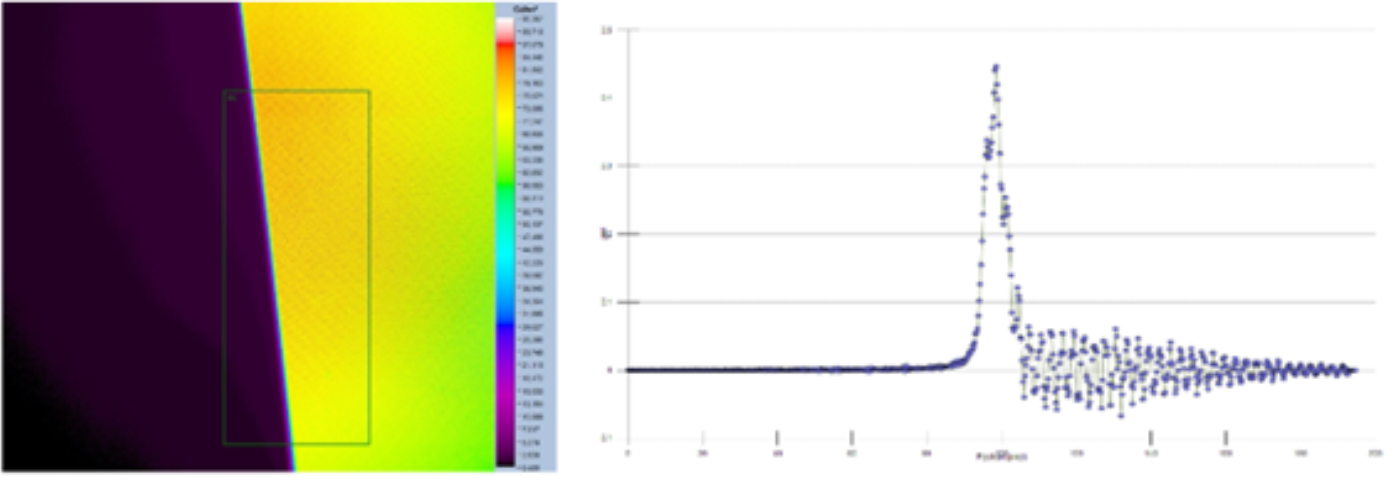

The major differences between a display in a smartphone or monitor and an AR/VR headset are essentially the viewing proximity and angular FOV. Testing a display at near-eye proximity means we need to account for things like the screen-door effect (when spaces between the projected display pixels become visible). This can impact tests that depend on measured spatial frequencies in the display—or measuring the changes between light and dark areas—to evaluate qualities such as sharpness and focus. Picking up the frequency between pixels can influence the accuracy of MTF (modulation transfer function) measurements that evaluate the AR/VR device’s optical performance.

The display’s image, once integrated into an AR/VR headset, is also virtual, which means that images are not viewed at one specific distance. We need to develop solutions capable of addressing virtual displays that present images at any optical distance specific to a particular device. What’s important for measurement, in this case, is addressing the challenge of ensuring sharp focus at any point on the virtual plane. This could be an unknown distance or a variable distance (in the case of varifocal headsets or tolerances in the relay optics inside the headset). The electronically controlled focus in our XRE Lens makes this type of focus setting much more flexible and precise, so we can ensure displays are in sharp focus when they are measured.

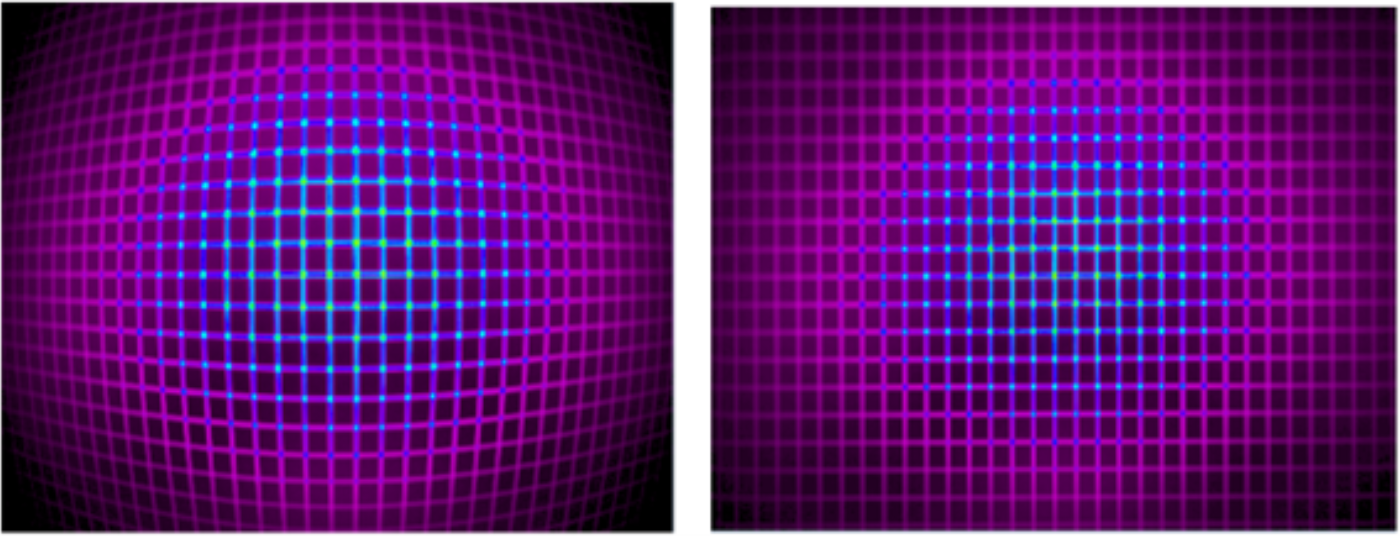

Another issue to address in AR/VR testing is distortion in images caused by capturing wide angular FOVs. Barrel distortion (sometimes referred to as a “fisheye” effect) is common in images captured using any wide-FOV lens. However, our software relies on an accurate spatial representation of the display (a rectilinear representation) to understand the coordinates of measured data points and apply analyses correctly. To account for this issue, Radiant’s AR/VR test systems apply a distortion calibration to images captured by our wide-FOV lenses to ensure proper alignment of measurement coordinates with true coordinates of points within the area of the display.
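The general idea of mapping distorted image coordinates back to rectilinear ones can be sketched with a simple one-coefficient radial model. This is a textbook simplification and not Radiant's calibration, which the interview does not detail; the coefficient `k1`, the normalized coordinates, and both function names are assumptions for illustration. Real wide-FOV calibrations typically use richer models fitted to a measured target.

```python
def distort(x, y, k1):
    """Apply one-coefficient radial distortion to a normalized point.

    Coordinates are relative to the image center. A negative k1 pulls points
    toward the center, which produces barrel distortion.
    """
    scale = 1.0 + k1 * (x * x + y * y)
    return x * scale, y * scale


def undistort(xd, yd, k1, iterations=20):
    """Recover the rectilinear point by fixed-point iteration.

    Starts from the distorted point and repeatedly divides by the scale factor
    evaluated at the current estimate. Suitable for mild distortion only.
    """
    xu, yu = xd, yd
    for _ in range(iterations):
        scale = 1.0 + k1 * (xu * xu + yu * yu)
        xu, yu = xd / scale, yd / scale
    return xu, yu


k1 = -0.1  # hypothetical barrel-distortion coefficient
xd, yd = distort(0.8, 0.6, k1)
xu, yu = undistort(xd, yd, k1)
print((xd, yd))  # distorted position, pulled toward the center
print((xu, yu))  # close to the original (0.8, 0.6)
```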

Lastly, AR/VR displays can exhibit visual defects that traditional FPDs do not—for example, distortion of the image in the headset caused by the device’s optics, or poor sharpness at different focal settings of the device. In varifocal headsets or headsets with foveated optics, we often need to account for two or more focal points of the same display and apply analysis to each point or image distance to ensure accuracy at all depths. In the case where two displays are used (one per eye) to create a combined binocular image for the device user, we also may need to measure both eye positions and compare visual differences from eye to eye.

How did you design your optical system to meet these challenges? Does it depend on the type of display (LCD, OLED, etc.)?

The type of the display is actually not a huge factor when it comes to evaluating the visual quality within the AR/VR headset. Issues like the screen-door effect, for instance, have much more to do with the resolution and pixel density of the display than the technology used. Radiant has developed new analysis functions in our software to handle the effects of screen-door pixelation in images. For instance, our new line spread function MTF measurement method relies on frequency changes across a single line of illuminated pixels in the display, so the issue of pixel-to-pixel frequencies is eliminated.
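For readers unfamiliar with the line spread function (LSF) approach, the standard relationship is that the MTF is the magnitude of the Fourier transform of the LSF, normalized to 1 at zero frequency. The sketch below shows only that textbook relationship on a one-dimensional intensity profile taken across an illuminated line. It is not Radiant's implementation, and the sample profile and function name are invented for illustration.

```python
import cmath
import math


def mtf_from_lsf(lsf):
    """Return MTF values at frequencies k/n cycles per sample, k = 0..n//2.

    `lsf` is a 1D intensity profile sampled perpendicular to the illuminated
    line. The result is normalized so that the zero-frequency value is 1.
    """
    n = len(lsf)
    total = sum(lsf)
    if total == 0:
        raise ValueError("LSF must contain some signal")
    mtf = []
    for k in range(n // 2 + 1):
        # Direct discrete Fourier transform term for frequency index k.
        acc = sum(v * cmath.exp(-2j * math.pi * k * i / n) for i, v in enumerate(lsf))
        mtf.append(abs(acc) / abs(total))
    return mtf


# Illustrative profile: a line blurred over three samples.
lsf = [0, 0, 1, 2, 1, 0, 0, 0]
for k, value in enumerate(mtf_from_lsf(lsf)):
    print(f"{k / len(lsf):.3f} cycles/sample: MTF = {value:.3f}")
```

A perfectly sharp line (a single nonzero sample) would give an MTF of 1 at every frequency, while the blurred profile above falls to 0.5 at a quarter cycle per sample and to 0 at the highest sampled frequency.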

System and software calibrations can ensure we capture images that are appropriately rectilinear for accurate analysis. Also, with our new XRE Lens, we can adapt for different focal points thanks to the system’s internal electronic focus. This is one of the advantages of the XRE Lens that give it flexibility to meet a range of measurement requirements. The lens can quickly adapt focus to virtual image distances ranging from 0.5 meters to infinity using software to set precise focal depths. Because the electronic focus is software-controlled, it can also be adjusted for particular settings as part of a fully automated visual inspection routine. This can be done without moving the system and without moving components, eliminating manual adjustment or positioning error—completely hands-free.
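The stated focus range of 0.5 meters to infinity corresponds to 2 to 0 diopters, since optical power in diopters is the reciprocal of distance in meters. The sketch below converts between the two and builds a list of focus distances evenly spaced in diopters, which is one common way to step through a focus range. The sweep routine and function names are hypothetical and do not represent the XRE Lens control interface.

```python
import math


def distance_to_diopters(distance_m):
    """Convert a virtual image distance in meters to diopters (1/m)."""
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    return 0.0 if math.isinf(distance_m) else 1.0 / distance_m


def focus_sweep(near_m, far_m, steps):
    """Return `steps` focus distances (steps >= 2) evenly spaced in diopters."""
    near_d = distance_to_diopters(near_m)
    far_d = distance_to_diopters(far_m)
    distances = []
    for i in range(steps):
        d = near_d + (far_d - near_d) * i / (steps - 1)
        # Zero diopters corresponds to focus at infinity.
        distances.append(math.inf if d == 0 else 1.0 / d)
    return distances


print(distance_to_diopters(0.5))       # 2.0
print(focus_sweep(0.5, math.inf, 5))   # 0.5 m, about 0.667 m, 1 m, 2 m, infinity
```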

What has been the feedback from customers so far? Do you expect that future displays will be even more challenging to test?

We have had a really positive response from customers on our AR/VR Lens and XRE Lens solutions. What’s great is that these manufacturers continue to push the boundaries and provide us with valuable feedback that helps us push our optical designs even further. For each new measurement project, there’s something new we learn and a new feature we can add to our hardware or software development cycles. We’ve been very fortunate to work closely with many of our customers to learn how we can optimize our test system designs to meet specific needs, and how we can be even more adaptable so that they can easily meet their goals for quality and time to market.

Since we don’t foresee standardization across AR/VR devices happening any time soon, we expect we’ll be able to continue to develop solutions to address more headset diversity. Future AR/VR displays are definitely going to incorporate a greater number of unique specifications. We can already see where new pancake optics and other optical architectures are pairing with near-infrared eye tracking systems to enable more adaptive device focus, which will mean we need to continue to push the capability of our electronic focus.

FOVs also continue to expand, so we’re always looking at ways to capture an even broader angular area with our systems, while continuing to measure from the near-eye position. We need to provide as much imaging resolution as possible to ensure customers can continue to measure display output with solutions that best match the acuity of human vision.

Finally, do you have any advice for AR/VR manufacturers looking for a display test system?

We always underscore the importance of understanding the use case. Is this an R&D test system, where the requirement is to get very in-depth data to characterize how the AR/VR device performs? Or is this a test system that is intended to be sent to a contract manufacturer, for example, who will be performing outgoing quality control (OQC) on several AR/VR devices—where the expectation is that the test system runs around the clock inspecting thousands of devices or, in its lifetime, perhaps millions of devices?

It's really important that manufacturers aim for something that meets their needs with minimal cost and complexity, and that they keep in mind how the technology is evolving at an extremely rapid rate. The last thing any manufacturer wants to do is get bogged down in some kind of lengthy development cycle or inspection project, which could cause them to be leap-frogged by the competition who may be able to get to market sooner.

In this industry, if a manufacturer waits too long to release a product—even if the design is a really good one—someone else may come up with something better in the meantime. The focus should be to get a new AR/VR device to market as quickly and practically as possible. This is why Radiant’s focus is on test systems that can be adapted and deployed in time with development needs.

The AR/VR Display Forum will take place on September 20-21. To watch the presentations from Radiant Vision Systems and other speakers, register at:

https://www.accelevents.com/e/arvrdisplayforum22?aff=pr

All attendees will be able to watch recordings of the presentations for 30 days after the event.

About DSCC

DSCC, a Counterpoint Research Company, is the leader in advanced display market research with offices across all the key manufacturing centers and markets of East Asia as well as the US and UK. It was formed by experienced display market analysts from across the display supply chain and delivers valuable insights, data and supply chain analyses on the display industry through consulting, syndicated reports and events. Its accurate and timely analyses help businesses navigate the complexities of the display supply chain.